WebSphere Liberty

WebSphere Liberty Recipe

- Review the Operating System recipe for your OS. The highlights are to ensure CPU, RAM, network, and disk are not consistently saturated.

- Review the Java recipe for your JVM. The highlights are to tune the maximum heap size (-Xmx or -XX:MaxRAMPercentage), the maximum nursery size (-Xmn) and enable verbose garbage collection and review its output with the GCMV tool.

- Liberty has a single thread pool where most application work occurs and this pool is auto-tuned based on throughput. In general, it is not recommended to tune nor specify this element; however, if there is a throughput problem or there are physical or virtual memory constraints, test with <executor maxThreads="X" />. If an explicit value is better, consider opening a support case to investigate why the auto-tuning is not optimal.

- If receiving HTTP(S) requests:

  - If using the servlet feature less than version 4, then consider explicitly enabling HTTP/2 with protocolVersion="http/2".

  - For HTTP/1.0 and HTTP/1.1, avoid client keepalive socket churn by setting maxKeepAliveRequests="-1". This is the new default as of Liberty 21.0.0.6.

  - For servers with incoming LAN HTTP traffic from clients using persistent TCP connection pools with keep alive (e.g. a reverse proxy like IHS/httpd or web service client), consider increasing persistTimeout to reduce keepalive socket churn.

  - For HTTP/1.0 and HTTP/1.1, minimize the number of application responses with HTTP codes 400, 402-417, or 500-505 to reduce keepalive socket churn or use HTTP/2.

  - If using HTTP session database persistence, tune the <httpSessionDatabase /> element.

  - If possible, configure and use HTTP response caching.

  - If using TLS, set -DtimeoutValueInSSLClosingHandshake=1.

  - Consider enabling the HTTP NCSA access log with response times for post-mortem traffic analysis.

  - If there is available CPU, test enabling HTTP response compression.

  - If the applications don't use resources in META-INF/resources directories of embedded JAR files, then set <webContainer skipMetaInfResourcesProcessing="true" />.

  - Consider reducing each HTTP endpoint's tcpOptions maxOpenConnections to approximately the expected maximum number of concurrent processing and queued requests that avoids excessive request queuing under stress, and test with a saturation & slow performance test.

- If using databases (JDBC):

  - Connection pools generally should not be consistently saturated. Tune <connectionManager maxPoolSize="X" />.

  - Consider tuning each connectionManager's numConnectionsPerThreadLocal and purgePolicy, and each dataSource's statementCacheSize and isolationLevel.

  - Consider disabling idle and aged connection timeouts (and tune any firewalls, TCP keep-alive, and/or database connection timeouts, if needed).

- If using JMS MDBs without a message ordering requirement, tune activation specifications' maxConcurrency to control the maximum concurrent MDB invocations and maxBatchSize to control message batch delivery size.

- If using EJBs:

  - If using non-@Asynchronous remote EJB interfaces in the application for EJBs available within the same JVM, consider using local interface equivalents instead to avoid extra processing, thread usage, and the potential of deadlocks.

  - If an EJB is only needed to be accessed locally within the same server, then use local interfaces (pass-by-reference) instead of remote interfaces (pass-by-value) which avoids serialization.

- If using security, consider tuning the authentication cache and LDAP sizes.

- Use the minimal feature set needed to run your application to reduce startup time and footprint.

- Upgrade to the latest version and fixpack as there is a history of making performance improvements and fixing issues or regressions over time.

- Consider enabling request timing which will print a warning and stack trace when requests exceed a time threshold.

- Review logs for any errors, warnings, or high volumes of messages.

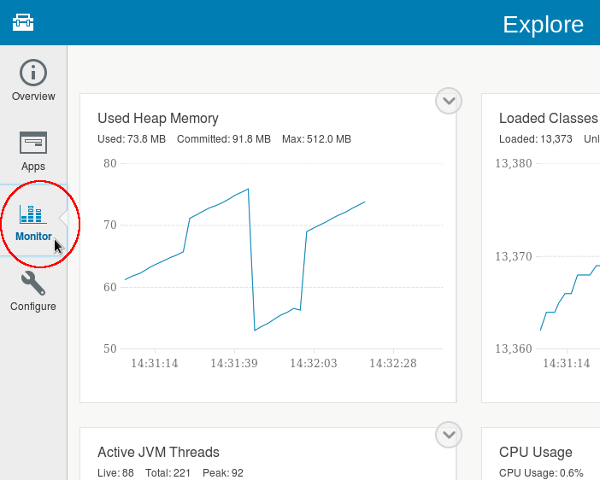

- Monitor, at minimum, response times, number of requests, thread pools, connection pools, and CPU and Java heap usage using mpMetrics-2.3, monitor-1.0, JAX-RS Distributed Tracing, and/or a third party monitoring program.

- Consider enabling event logging which will print a message when request components exceed a time threshold.

- Consider running with a sampling profiler such as Health Center or Mission Control for post-mortem troubleshooting.

- Disable automatic configuration and application update checking if such changes are unexpected.

- If the application writes a lot to messages.log, consider switching to binary logging for improved performance.

- Review the performance tuning topics in the Open Liberty and WebSphere Liberty documentation.

- If running on z/OS:

  - Consider enabling SMF 120 records.

  - Consider WLM classification: zosWlm-1.0

  - Enable hardware cryptography for Java 8 or Java 11 and above

Documentation

Open Liberty is an open source Java application server. WebSphere Liberty is IBM's commercial extension of Open Liberty. Both may be entitled for support.

For feature differences between editions, review the features documentation and/or find the latest quarterly webinar, click the link, open the PDF, and search for "Periodic Table of Liberty".

For those familiar with WebSphere traditional, review Demystifying Liberty for WebSphere administrators and the Liberty information portal.

WebSphere Liberty:

Open Liberty:

Continuous Delivery

If you have longer-term support considerations, consider using Liberty releases ending in .3, .6, .9 or .12.

server.xml

Liberty is configured through a server.xml:

jvm.options

Generic JVM arguments are set either in $LIBERTY/usr/servers/$SERVER/jvm.options for a particular JVM or in $LIBERTY/etc/jvm.options as defaults for all servers.

Put each option on its own line. The file supports commented lines that start with #.

Verbose Garbage Collection

Consider enabling Java verbose garbage collection on all JVMs, including production. This will help with performance analysis, OutOfMemoryErrors, and other post-mortem troubleshooting. Benchmarks show an overhead of less than 1% and usually less than 0.5%.

- OpenJ9 or IBM Java -Xverbosegclog:

  -Xverbosegclog:logs/verbosegc.%seq.log,20,50000

- HotSpot -Xloggc:

  - Java >= 9:

    -Xlog:safepoint=info,gc:file=logs/verbosegc.log:time,level,tags:filecount=5,filesize=20M

  - Java 8:

    -Xloggc:logs/verbosegc.log -XX:+UseGCLogFileRotation -XX:NumberOfGCLogFiles=5 -XX:GCLogFileSize=20M -XX:+PrintGCDateStamps -XX:+PrintGCDetails

Monitor for high pause times and that the proportion of time in GC pauses is less than ~5-10% using the GCMV tool.
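The proportion of time in GC pauses is plain arithmetic once the pause durations are known. The sketch below is not a verbose GC parser and does not replace GCMV; it only shows the calculation. The function name and the sample pause values are hypothetical.

```python
def gc_pause_fraction(pause_ms, interval_ms):
    """Return the fraction of wall-clock time spent in GC pauses.

    pause_ms: iterable of individual pause durations in milliseconds
    interval_ms: length of the observed wall-clock window in milliseconds
    """
    if interval_ms <= 0:
        raise ValueError("interval_ms must be positive")
    return sum(pause_ms) / interval_ms


# Hypothetical sample: four pauses observed during a 60-second window.
pauses = [120.0, 85.5, 240.0, 95.0]
fraction = gc_pause_fraction(pauses, 60_000)
print(f"Time in GC pauses: {fraction:.2%}; longest pause: {max(pauses):.1f} ms")
```

A result consistently above roughly 5-10% would suggest reviewing heap and nursery sizing as described in the Java recipe.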

Logs and Trace

See WebSphere Liberty Logging Documentation and Open Liberty Logging Documentation.

Always provide or analyze both console.log and messages.log as they may have different sets of messages.

Ideally, when gathering Liberty server logs, gather the entire logs folder under $LIBERTY/usr/servers/$SERVER/logs, as well as the server.xml under $LIBERTY/usr/servers/$SERVER/.

Liberty uses a Java retransformation agent to support some of its logging capabilities. On IBM Java < 7.1, the mere presence of a retransformation agent will cause the VM to double the amount of class native memory used, whether any individual class is transformed or not. On IBM Java >= 7.1, additional memory is allocated on-demand only for the classes that are retransformed.

WAS traditional Cross-Component Trace (XCT) and Request Metrics are not available in Liberty.

messages.log

messages.log includes WAS messages equal to or above the INFO threshold (non-configurable), java.util.logging.Logger messages equal to or above traceSpecification (default *=info), System.out, and System.err, with timestamps.

On server startup, any old messages.log is first rotated (unless the bootstrap property com.ibm.ws.logging.newLogsOnStart=false is set).

To specify the maximum file size (in MB) and maximum number of historical files:

<logging maxFiles="X" maxFileSize="Y" />

For those experienced with WAS traditional, messages.log is like the combination of SystemOut.log and SystemErr.log; however, unlike WAS traditional, Liberty doesn't append to the existing messages.log on restart by default (unless the bootstrap property com.ibm.ws.logging.newLogsOnStart=false is set).

console.log

console.log includes native stdout, native stderr, WAS messages (except trace) equal to or above the threshold set by consoleLogLevel (by default, AUDIT), System.out plus System.err (if copySystemStreams is true, which it is by default) and without timestamps (unless consoleFormat is simple). The console.log is always truncated on server startup when using $LIBERTY/bin/server start and does not support maximum size nor rollover.

For those experienced with WAS traditional, by default, console.log is like the combination of native_stdout.log, native_stderr.log, and SystemOut.log and SystemErr.log messages above AUDIT without timestamps.

If you would like to use console.log for native stdout and native stderr only and use messages.log for everything else (note: a couple of AUDIT messages will still show up before the logging configuration is read):

<logging copySystemStreams="false" consoleLogLevel="OFF" />

Starting with Liberty 20.0.0.5, consoleFormat supports the simple value that adds timestamps. For example:

$ docker run --rm -e "WLP_LOGGING_CONSOLE_FORMAT=simple" -it open-liberty:latest

Launching defaultServer (Open Liberty 20.0.0.6/wlp-1.0.41.cl200620200528-0414) on Eclipse OpenJ9 VM, version 1.8.0_252-b09 (en_US)

[6/24/20 19:21:53:874 GMT] 00000001 com.ibm.ws.kernel.launch.internal.FrameworkManager A CWWKE0001I: The server defaultServer has been launched.

Disabling System.out and/or System.err

In general, it's not recommended to fully disable System.out and/or System.err because some components in the Java Class Library may write diagnostics to them; instead, it's recommended to change the code that's causing excessive writing to System.out and/or System.err. However, it is recommended to disable System.out and System.err to console.log (while leaving output to messages.log) if duplicated output is not desired and causes strain.

You may temporarily disable System.out and/or System.err to messages.log and/or console.log with the following (e.g. if excessive log writing is causing a performance issue):

- System.out and System.err may be disabled to just console.log (leaving output to messages.log) with com.ibm.ws.logging.copy.system.streams=false in bootstrap.properties.

- System.out and/or System.err may be disabled to messages.log and console.log with <logging traceSpecification="*=info:SystemOut=off:SystemErr=off"/> in server.xml.

trace.log

Diagnostic trace is enabled with traceSpecification. For example:

<logging traceSpecification="*=info" maxFileSize="250" maxFiles="4" />

For a more compact format, add traceFormat="BASIC".

If trace is enabled, trace.log includes WAS messages, java.util.logging.Logger messages, WAS diagnostic trace, System.out, and System.err, with timestamps.

Sending trace to native stdout:

<logging traceSpecification="*=info" traceFileName="stdout" maxFileSize="0" traceFormat="BASIC" />

Request Timing

Consider enabling and tuning request timing which will print a warning and stack trace when requests exceed a time threshold. Even in the case that you use a monitoring product to perform slow request detection, the built-in Liberty detection is still valuable for easy searching, alerting, and providing diagnostics to IBM support cases.

The slowRequestThreshold (designated X below) is the main configuration and it should be set to your maximum expected application response time in seconds, based on business requirements and historical analysis. Make sure to include the s after the value to specify seconds.

The hungRequestThreshold is an optional configuration that works similarly to slowRequestThreshold, but if it is exceeded, Liberty will produce three thread dumps, one minute apart, starting after a request exceeds this duration.

Performance test the overhead and adjust the thresholds and sampleRate as needed to achieve acceptable overhead.

<featureManager><feature>requestTiming-1.0</feature></featureManager>

<requestTiming slowRequestThreshold="Xs" hungRequestThreshold="600s" sampleRate="1" />

Some benchmarks have shown that requestTiming has an overhead of about 3-4% when using a sampleRate of 1:

The requestTiming-1.0 feature, when activated, has been shown to have a 4% adverse effect on the maximum possible application throughput when measured with the DayTrader application. While the effect on your application might be more or less than that, you should be aware that some performance degradation might be noticeable.

As of this writing, requestTiming does not track asynchronous servlet request runnables, although a feature request has been opened.

Example output:

[10/1/20 13:21:42:235 UTC] 000000df com.ibm.ws.request.timing.manager.SlowRequestManager W TRAS0112W: Request AAAAqqZnfKN_AAAAAAAAAAB has been running on thread 000000c2 for at least 5000.936ms. The following stack trace shows what this thread is currently running.

at java.lang.Thread.sleep(Native Method) [...]

The following table shows the events that have run during this request.

Duration Operation

5003.810ms + websphere.servlet.service | pd | com.ibm.pd.Sleep?durationms=10000

Event Logging

Consider enabling and tuning event logging to log excessively long component times of application work. This is slightly different from request timing. Consider the following case: you've set the requestTiming threshold to 10 seconds, which will print a tree of events for any request taking more than 10 seconds. However, suppose a request performs three database queries that take 1 second, 2 seconds, and 6 seconds. In this case, the total response time is 9 seconds, which is below the threshold, but the one query that took 6 seconds is presumably concerning, so event logging can granularly monitor for such events.
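The difference between a whole-request threshold and a per-event threshold can be illustrated with a small sketch. This is not Liberty code; the function name and the 5-second per-event threshold are hypothetical, and only the 10-second request threshold and the 1, 2, and 6 second durations come from the case described above.

```python
def check_request(component_seconds, request_threshold=10.0, event_min_duration=5.0):
    """Compare a whole-request threshold with a per-event threshold.

    Returns (slow_request, slow_events):
      slow_request: True if the summed duration exceeds request_threshold
      slow_events: the individual durations at or above event_min_duration
    """
    total = sum(component_seconds)
    slow_request = total > request_threshold
    slow_events = [d for d in component_seconds if d >= event_min_duration]
    return slow_request, slow_events


# Three database queries taking 1, 2, and 6 seconds (9 seconds in total).
slow_request, slow_events = check_request([1.0, 2.0, 6.0])
print(f"slow request: {slow_request}; slow events: {slow_events}")
```

The request as a whole stays under its threshold, while the 6-second query is still reported. In Liberty itself, the per-event threshold corresponds to the minDuration attribute of the eventLogging element.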

<featureManager>

<feature>eventLogging-1.0</feature>

</featureManager>

<eventLogging includeTypes="websphere.servlet.service" minDuration="500ms" logMode="exit" sampleRate="1" />

Example output:

[10/1/15 14:10:57:962 UTC] 00000053 EventLogging I END requestID=AAABqGA0rs2_AAAAAAAAAAA # eventType=websphere.servlet.service # contextInfo=pd | com.ibm.pd.Sleep?durationms=10000 # duration=10008.947ms

Binary Logging

Consider using Binary Logging. In benchmarks, binaryLogging reduced the overhead of logs and trace by about 50%. Note that binary logging does not use less disk space and in fact will use more disk space; the performance improvements occur for other reasons.

Add the following additional lines to bootstrap.properties for the server (if no such file exists, create the file in the same directory as server.xml):

websphere.log.provider=binaryLogging-1.0

com.ibm.hpel.log.purgeMaxSize=100

com.ibm.hpel.log.outOfSpaceAction=PurgeOld

com.ibm.hpel.trace.purgeMaxSize=2048

com.ibm.hpel.trace.outOfSpaceAction=PurgeOld

Thread Pools

Most application work in Liberty occurs in a single thread pool named "Default Executor" (by default). The <executor /> element in server.xml may be used to configure this pool; however, unless there are observed problems with throughput, it is generally not recommended to tune nor even specify this element. If maxThreads is not configured, Liberty dynamically adjusts the thread pool size between coreThreads and maxThreads based on observed throughput:

In most environments, configurations, and workloads, the Open Liberty thread pool does not require manual configuration or tuning. The thread pool self-tunes to determine how many threads are needed to provide optimal server throughput. [...] However, in some situations, setting the coreThreads or maxThreads attributes might be necessary. The following sections describe these attributes and provide examples of conditions under which they might need to be manually tuned.

- coreThreads: This attribute specifies the minimum number of threads in the pool. [...]

- maxThreads: This attribute specifies the maximum number of threads in the pool. The default value is -1, which is equal to MAX_INT, or effectively unlimited.

If there is a throughput problem, test with a maximum number of threads equal to $cpus * 2. If this or another explicit value is better, consider opening a support case to investigate why the auto-tuning is not optimal. For example:

<executor maxThreads="64" />

Diagnostic trace to investigate potential executor issues:

com.ibm.ws.threading.internal.ThreadPoolController=all

As of this writing, the default value of coreThreads is $cpus * 2.
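As a convenience, the $cpus * 2 starting point for a maxThreads test can be computed on the target host. This is a minimal sketch; the function name is hypothetical, and os.cpu_count() reports the logical CPUs visible to the operating system, which may be more than what a container or LPAR is actually entitled to use.

```python
import os


def suggested_max_threads(cpus=None):
    """Return cpus * 2, the starting value suggested above for a maxThreads test."""
    if cpus is None:
        # os.cpu_count() may return None if the count cannot be determined.
        cpus = os.cpu_count() or 1
    return cpus * 2


# Print an executor element to paste into server.xml for the test run.
print(f'<executor maxThreads="{suggested_max_threads()}" />')
```

With 32 CPUs, this produces the maxThreads="64" value used in the example above. Treat the result as a value to test with, not as a recommendation to override the auto-tuning.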

Additional details:

HTTP

If the applications don't use resources in META-INF/resources directories of embedded JAR files, then set <webContainer skipMetaInfResourcesProcessing="true" />.

Liberty (starting in 18.0.0.2) uses DirectByteBuffers for HTTP reading and writing just like WAS traditional; however, there is only a global pool rather than ThreadLocal pools, and the DBB sizes and bucket sizes may be configured with, for example:

<bytebuffer poolSizes="32,1024,8192,16384,24576,32768,49152,65536" poolDepths="100,100,100,20,20,20,20,20" />

Keep Alive Connections

Max requests per connection

By default, for Liberty < 21.0.0.6, for HTTP/1.0 and HTTP/1.1 (but not HTTP/2.0), Liberty closes an incoming HTTP keep alive connection after 100 requests (see maxKeepAliveRequests). This may cause a significant throughput impact, particularly with TLS (in one benchmark, ~100%). To disable such closure of sockets, set maxKeepAliveRequests="-1":

<httpOptions maxKeepAliveRequests="-1" />

This is the default as of Liberty 21.0.0.6.

Idle timeouts

In general, for servers with incoming LAN network traffic from clients using persistent TCP connection pools (e.g. a reverse proxy like IHS/httpd or web service client), increase the idle timeout (see persistTimeout) to avoid connections getting kicked out of the client connection pool. Try values less than 575 hours. For example:

<httpOptions persistTimeout="575h" />

Error codes closing keep-alive connections

If an HTTP response returns what's internally considered an "error code" (HTTP 400, 402-417, or 500-505; any StatusCodes instance with the third parameter set to true); then, after the response completes, if the socket is a keep-alive socket, it will be closed. This may impact throughput if an application is, for example, creating a lot of HTTP 500 error responses and thus any servers with incoming LAN network traffic from clients using persistent TCP connection pools (e.g. a reverse proxy like IHS/httpd or web service client) will have to churn through more sockets than otherwise (particularly impactful for TLS handshakes). As an alternative to minimizing such responses, test using HTTP/2 instead.
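To estimate how much keep-alive churn such responses may cause, the status codes from an access log can be classified using the ranges listed above. This sketch only mirrors the ranges stated in the text (400, 402-417, 500-505); it does not read Liberty's StatusCodes class, and the function names and sample data are hypothetical.

```python
def closes_keep_alive(status):
    """True if the status falls in the ranges described above: 400, 402-417, 500-505."""
    return status == 400 or 402 <= status <= 417 or 500 <= status <= 505


def closing_fraction(statuses):
    """Fraction of responses that would close an HTTP/1.x keep-alive socket."""
    if not statuses:
        return 0.0
    closing = sum(1 for s in statuses if closes_keep_alive(s))
    return closing / len(statuses)


# Hypothetical sample of response codes taken from an access log.
sample = [200, 200, 404, 500, 401, 302, 503, 200]
print(f"{closing_fraction(sample):.1%} of responses would close the connection")
```

Note that 401 is not in the listed ranges, so authentication challenges do not count toward the total in this sketch.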

HTTP Access Logs

HTTP access logging with response times may be used to track the number and response times of HTTP(S) requests in an NCSA format using %D (in microseconds). The example also uses %{R}W which is the time until the first set of bytes is sent in response (in microseconds) which is often a good approximation for application response time (as compared to %D which is end-to-end response time including client and network).

For example:

<httpEndpoint httpPort="9080" httpsPort="9443">

<accessLogging filepath="${server.output.dir}/logs/http_access.log" maxFileSize="100" maxFiles="4" logFormat='%h %u %t "%r" %s %b %D %{R}W' />

</httpEndpoint>

Starting with Liberty 21.0.0.11, the %{remote}p option is available to print the ephemeral local port of the client which may be used to correlate to network trace:

<httpEndpoint httpPort="9080" httpsPort="9443">

<accessLogging filepath="${server.output.dir}/logs/http_access.log" maxFileSize="100" maxFiles="4" logFormat='%h %u %t "%r" %s %b %D %{R}W %{remote}p %p' />

</httpEndpoint>

If you are on older versions of Liberty, ensure you have APARs PI20149 and PI34161.
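For post-mortem analysis, access log lines written with the first logFormat above can be split into fields with a regular expression. This is a minimal sketch: the sample line is hypothetical (not captured from a real server), the function name is illustrative, and the pattern assumes the fields appear exactly in the order %h %u %t "%r" %s %b %D %{R}W.

```python
import re

# One group per field of: %h %u %t "%r" %s %b %D %{R}W
LINE = re.compile(
    r'^(?P<host>\S+) (?P<user>\S+) \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<bytes>\S+) '
    r'(?P<total_us>\S+) (?P<first_byte_us>\S+)'
)


def parse_line(line):
    """Return a dict of selected fields, or None if the line does not match."""
    m = LINE.match(line)
    if m is None:
        return None

    def to_ms(value):
        # Both %D and %{R}W are in microseconds; tolerate a non-numeric placeholder.
        return int(value) / 1000.0 if value.isdigit() else None

    return {
        "request": m.group("request"),
        "status": int(m.group("status")),
        "total_ms": to_ms(m.group("total_us")),
        "first_byte_ms": to_ms(m.group("first_byte_us")),
    }


# Hypothetical sample line in the format above.
sample = ('10.0.0.5 - [01/Oct/2020:13:21:42 +0000] '
          '"GET /pd/Sleep?durationms=10000 HTTP/1.1" 200 58 10008947 10008102')
print(parse_line(sample))
```

Because the pattern is not anchored at the end of the line, it also tolerates extra trailing fields such as %{remote}p and %p.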

See also:

HTTP Sessions

Sticky sessions

When using persistent Liberty servers and a load balancer with sticky JSESSIONID load balancing, consider setting each server's cloneID explicitly.

Maximum in-memory sessions without session persistence

By default, Liberty sets allowOverflow="true" for HTTP sessions, which means that maxInMemorySessionCount is not considered and HTTP sessions are unbounded which may cause OutOfMemoryErrors in the default configuration without session persistence. If allowOverflow is disabled, maxInMemorySessionCount should be sized taking into account the maximum heap size, the average HTTP session timeout, and the average HTTP session heap usage.
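One way to reason about a maxInMemorySessionCount value is to combine the three factors named above. The sketch below is a rough estimate under stated assumptions, not an official sizing formula: the share of heap reserved for sessions, the average session size, the session creation rate, and the function names are all hypothetical inputs that would need to come from heap dump analysis and monitoring.

```python
def max_sessions_for_heap(max_heap_bytes, session_heap_fraction, avg_session_bytes):
    """Upper bound on sessions that fit in the share of heap reserved for them."""
    return int(max_heap_bytes * session_heap_fraction) // avg_session_bytes


def expected_live_sessions(new_sessions_per_second, session_timeout_seconds):
    """Rough steady-state estimate: creation rate multiplied by the session timeout."""
    return int(new_sessions_per_second * session_timeout_seconds)


# Hypothetical inputs: 2 GiB heap, 25% of it budgeted for sessions, 50 KiB per session,
# 5 new sessions per second, and a 30-minute session timeout.
heap_bound = max_sessions_for_heap(2 * 1024**3, 0.25, 50 * 1024)
demand = expected_live_sessions(5, 30 * 60)
print(f"heap allows about {heap_bound} sessions; expected live sessions about {demand}")
```

If the expected number of live sessions approaches or exceeds what the heap budget allows, consider a larger heap, smaller sessions, a shorter timeout, or session persistence.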

HTTP Session Database Persistence

If using HTTP session database persistence, configure the <httpSessionDatabase /> element along the lines of WAS traditional tuning guidelines.

If using time-based writes, the writeInterval is the amount of time to wait before writing pending session updates. Note that there is a separate timer which controls the period at which a thread checks for any updates exceeding the writeInterval threshold. If the writeInterval is less than 10 seconds, then the timer is set to the value of the writeInterval; otherwise, the timer is set to a fixed 10 seconds, which means that writes may occur up to 10 seconds after the writeInterval threshold has passed.
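The interaction between writeInterval and the checking timer can be expressed as a small calculation. This sketch restates the rule described above; the function names are hypothetical, and the worst-case figure for intervals under 10 seconds is an inference from the same rule rather than something stated in the product documentation.

```python
def timer_period_seconds(write_interval_seconds):
    """Period of the checking timer: the writeInterval if under 10 seconds, else 10."""
    return write_interval_seconds if write_interval_seconds < 10 else 10


def worst_case_write_delay_seconds(write_interval_seconds):
    """Longest time a pending update may wait: the interval plus one timer period."""
    return write_interval_seconds + timer_period_seconds(write_interval_seconds)


for interval in (5, 10, 120):
    print(f"writeInterval={interval}s -> timer={timer_period_seconds(interval)}s, "
          f"worst case={worst_case_write_delay_seconds(interval)}s")
```

For a writeInterval of 120 seconds, for example, a pending update may be written as late as 130 seconds after it was made.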

HTTP Response Compression

Starting with Liberty 20.0.0.4, HTTP responses may be automatically compressed (gzip, x-gzip, deflate, zlib or identity) with the httpEndpoint compression element. For example, without additional options, the element compresses text/* and application/javascript response MIME types:

<httpEndpoint httpPort="9080" httpsPort="9443">

<compression />

</httpEndpoint>

This will reduce response body size but it will increase CPU usage.

Web Response Cache

The Web Response Cache configures HTTP response caching using the distributedMap. Example:

<featureManager>

<feature>webCache-1.0</feature>

</featureManager>

<distributedMap id="baseCache" memorySizeInEntries="2000" />

The configuration is specified in a cachespec.xml in the application. For details, see ConfigManager and the WAS traditional discussion of cachespec.xml. The response cache uses the baseCache.

HTTP/2

Large request bodies

If an HTTP/2 client is sending large request bodies (e.g. file uploads), then consider testing additional tuning in httpOptions under httpEndpoint available since 23.0.0.4:

- limitWindowUpdateFrames: "Specifies whether the server waits until half of the HTTP/2 connection-level and stream-level windows are exhausted before it sends WINDOW_UPDATE frames."

- settingsInitialWindowSize: "Specifies the initial window size in octets for HTTP/2 stream-level flow control."

- connectionWindowSize: "Specifies the window size in octets for HTTP/2 connection-level flow control."

Monitoring

Consider enabling, gathering, and analyzing WAS statistics on, at least, thread pools, connection pools, number of requests, average response time, and CPU and Java heap usage. This is useful for performance analysis and troubleshooting. The following options are not mutually exclusive:

- mpMetrics: Expose statistics through a REST endpoint:

  - Enable mpMetrics. For example:

    <featureManager> <feature>mpMetrics-4.0</feature> </featureManager>

  - Gather the data through the /metrics REST endpoint through Prometheus or direct requests.

- monitor-1.0: Expose statistics through Java MXBeans:

  - Enable monitor-1.0:

    <featureManager> <feature>monitor-1.0</feature> </featureManager>

  - Enable JMX access through localConnector-1.0 and/or restConnector-2.0

  - Gather the data through monitoring products, JConsole, or a Java client (example: libertymon).

  - Key MXBeans are ThreadPoolStats, JvmStats, ServletStats, SessionStats, and ConnectionPool. If all MXBeans are enabled (as they are by default if monitor-1.0 is configured), benchmarks show about a 4% overhead. This may be reduced by limiting the enabled MXBeans; for example:

    <monitor filter="ThreadPoolStats,ServletStats,ConnectionPool,..." />

- JAX-RS Distributed Tracing for applications with JAX-RS web services:

  - Enable mpOpenTracing-1.3:

    <featureManager> <feature>mpOpenTracing-1.3</feature> </featureManager>

  - Connect to Jaeger (easier because no user feature is required) or Zipkin.

mpMetrics

When mpMetrics-x.x (or the convenience microProfile-x.x feature) is enabled, the monitor-1.0 feature is implicitly enabled to provide vendor metrics that include metrics for thread pools, connection pools, web applications, HTTP sessions, and JAX-WS. This adds a small performance overhead (especially the thread pool metrics). If only a subset of these metrics is needed, reduce the overhead by adding a filter that explicitly configures the available metrics:

- Only JAX-RS:

  <monitor filter="REST" />

- Only some subset of the vendor metrics (pick and choose from the following list):

  <monitor filter="JVM,ThreadPool,WebContainer,Session,ConnectionPool,REST" />

- No JAX-RS nor vendor metrics and instead only base and application metrics:

  <monitor filter=" "/> <!-- space required -->

Centralized Logging

Consider sending log data to a central service such as Elastic Stack. This is useful for searching many logs at once.

Java Database Connectivity (JDBC)

Review common JDBC tuning, in particular:

- maxPoolSize: The maximum connections to the DB for this pool.

- statementCacheSize: Maximum number of cached prepared statements per connection.

- purgePolicy: Whether to purge all connections when one connection has a fatal error. Defaults to EntirePool. Consider FailingConnectionOnly.

- numConnectionsPerThreadLocal: Cache DB connections in ThreadLocals on the DefaultExecutor pool. Consider testing with an explicit maxThreads size for the pool.

- isolationLevel: If application semantics allow it, consider reducing the isolationLevel.

Connection pool idle and aged timeouts

For maximum performance, connections in the pool should not time out due to the idle timeout (maxIdleTime) nor the aged timeout (agedTimeout). To accomplish this, modify connectionManager configuration:

- Set minPoolSize to the same value as maxPoolSize or set maxIdleTime="-1", and

- Ensure agedTimeout is not specified or is set to -1, and

- Ensure any intermediate firewalls to the database do not have idle or age timeouts or configure client operating system TCP keep-alive timeouts to below these values, and

- Ensure the database does not have idle or age timeout.

For example:

<connectionManager minPoolSize="50" maxPoolSize="50" reapTime="-1" />

The reason to do this is that connection creation and destruction may be expensive (e.g. TLS, authentication, etc.). Besides increased latency, in some cases, this expense may cause a performance tailspin in the database that may make response time spikes worse; for example, something causes an initial database response time spike, incoming load in the clients continues apace, the clients create new connections, and the process of creating new connections causes the database to slow down more than it otherwise would, causing further backups, etc.

The main potential drawback of this approach is that if there is a firewall between the connection pool and the database, and the firewall has an idle or age timeout, then the connection may be destroyed and cause a stale connection exception the next time it's used. This may fail the request and purge the entire connection pool if purgePolicy="EntirePool". The main ways to avoid this are either to configure the firewall idle or age timeouts similar to above, or tune the TCP keepalive settings in the client or database operating systems below the timeouts.

Similarly, some databases may have their own idle or age timeouts. The database should be tuned similarly. For example, IBM DB2 does not have such connection timeouts by default.

Some people use connection pool usage as a proxy of database response time spikes. Instead, monitor database response times in the database or using Liberty's ConnectionPool MXBean and its InUseTime statistic.

Admin Center

The Admin Center is commonly put on port 9443, for example https://localhost:9443/adminCenter/

<featureManager>

<feature>adminCenter-1.0</feature>

</featureManager>

<quickStartSecurity userName="wsadmin" userPassword="wsadmin" />

Sampling Profiler

Consider enabling a sampling profiler, even in production. This does have a cost but provides very rich troubleshooting data on what Java code used most of the CPU, what monitors were contended, and periodic thread information. Benchmarks for Health Center showed an overhead of <2%. Gauge the overhead in a performance test environment.

- OpenJ9 or IBM Java:

  - Add the following to jvm.options and restart:

    -Xhealthcenter:level=headless

  - After each time the JVM gracefully stops, a healthcenter*.hcd file is produced in the current working directory (e.g. $LIBERTY/usr/servers/$SERVER/).

- HotSpot Mission Control:

  - Add the following to jvm.options and restart:

    -XX:+FlightRecorder -XX:StartFlightRecording=filename=jmcrecording.jfr,settings=profile

  - After each time the JVM gracefully stops, a *.jfr file is produced in the current working directory (e.g. $LIBERTY/usr/servers/$SERVER/).

Start-up

If a cdi feature is used (check the CWWKF0012I message) and the applications don't have CDI annotations in embedded archives, disable such scanning to improve startup times with <cdi12 enableImplicitBeanArchives="false"/>.

Jandex

Consider creating Jandex index files to pre-compute class and annotation data for application archives.

Startup Timeout Warning

By default, Liberty waits 30 seconds for applications to start. After this timeout expires, two things happen: a message is written to the logs saying the application didn't start quickly enough, and, during server startup, the server stops waiting for the application to start and reports that it is started, even though the application is not yet ready.

To set this value to (for example) one minute add the following to server.xml:

<applicationManager startTimeout="1m"/>

Startup order

Starting with Liberty 20.0.0.6, the application element has an optional startAfterRef attribute that allows controlling the order of application startup. Example:

<application id="APP1" location="APP1.war"/>

<webApplication id="APP2" location="APP2.war" startAfterRef="APP1"/>

<enterpriseApplication id="APP3" location="APP3.ear"/>

<application id="APP4" location="APP4.ear" startAfterRef="APP2, APP3"/>

OSGi Feature Startup Times

Edit wlp/usr/servers/<serverName>/bootstrap.properties:

osgi.debug=

Edit wlp/usr/servers/<serverName>/.options:

org.eclipse.osgi/debug/bundleStartTime=true

org.eclipse.osgi/debug/startlevel=true

Example output:

10 ms for total start time event STARTED - osgi.identity; type="osgi.bundle"; version:Version="1.3.42.202006040801"; osgi.identity="com.ibm.ws.kernel.service" [id=5]

13 ms for total start time event STARTED - osgi.identity; type="osgi.bundle"; version:Version="1.0.42.202006040801"; osgi.identity="com.ibm.ws.org.eclipse.equinox.metatype" [id=6]

The bundles have some dependencies so there are a series of start-levels to support the necessary sequencing, but all bundles within a given start-level are started in parallel, not feature-by-feature. You may disable parallel activation to get more accurate times but then you have to figure out all the bundles the feature enables.
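With many bundles, it may help to sort lines in the format of the example output above by start time. This is a minimal sketch that assumes every relevant line matches the two sample lines shown; the function name is hypothetical, and how the lines are collected from the server output is left to the reader.

```python
import re

# Matches lines such as:
# 10 ms for total start time event STARTED - ...; osgi.identity="bundle.name" [id=5]
START = re.compile(
    r'^(?P<ms>\d+) ms for total start time event STARTED - '
    r'.*osgi\.identity="(?P<bundle>[^"]+)" \[id=(?P<id>\d+)\]'
)


def slowest_bundles(lines, top=10):
    """Return up to `top` (milliseconds, bundle name) pairs, slowest first."""
    found = []
    for line in lines:
        m = START.match(line.strip())
        if m:
            found.append((int(m.group("ms")), m.group("bundle")))
    return sorted(found, reverse=True)[:top]


# The two lines from the example output above.
lines = [
    '10 ms for total start time event STARTED - osgi.identity; type="osgi.bundle"; '
    'version:Version="1.3.42.202006040801"; osgi.identity="com.ibm.ws.kernel.service" [id=5]',
    '13 ms for total start time event STARTED - osgi.identity; type="osgi.bundle"; '
    'version:Version="1.0.42.202006040801"; '
    'osgi.identity="com.ibm.ws.org.eclipse.equinox.metatype" [id=6]',
]
for ms, bundle in slowest_bundles(lines):
    print(f"{ms:6d} ms  {bundle}")
```

Because bundles within a start-level are started in parallel, the per-bundle times are indicative only and should not be summed to estimate total startup time.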

Idle CPU

Idle CPU usage may be decreased if dynamic configuration and application updates are not required:

<applicationMonitor dropinsEnabled="false" updateTrigger="disabled"/>

<config updateTrigger="disabled"/>

Alternatively, you may still support dynamic updates through MBean triggers, which have lower overhead than the default polling:

<applicationMonitor updateTrigger="mbean" pollingRate="999h" />

<config updateTrigger="mbean" monitorInterval="999h" />

When the MBean is triggered, the update occurs immediately (i.e. the pollingRate and monitorInterval values can be set as high as you like).

Authentication Cache

If using security and the application allows it, consider increasing the authentication cache timeout from the default of 10 minutes to a larger value using, for example, <authCache timeout="30m" />.

If using security and the authentication cache is becoming full, consider increasing the authentication cache maxSize from the default of 25000 to a larger value using, for example, <authCache maxSize="100000" />.

LDAP

If using LDAP, consider increasing various cache values in the <attributesCache />, <searchResultsCache />, and <contextPool /> elements. For example:

<ldapRegistry [...]>

<ldapCache>

<attributesCache size="4000" sizeLimit="4000" timeout="2400s" />

<searchResultsCache resultsSizeLimit="4000" size="4000" timeout="2400s" />

</ldapCache>

<contextPool preferredSize="6" />

</ldapRegistry>

Web Services

JAX-RS

JAX-RS Client

The JAX-RS 2.0 and 2.1 clients are built on top of Apache CXF. The JAX-RS 3.0 and above clients are built on top of RESTEasy. Upgrading to JAX-RS 3.0 and above may require some application changes.

For applications, it is best to re-use anything that you can. For example, you should try to re-use Client instances if you are invoking multiple different endpoints (the Client implementation in Liberty is thread safe, although other implementations may not be). If you are invoking the same endpoint, but possibly with different parameters, then you should re-use the WebTarget. If you are invoking the same request (same parameters, cookies, message body, etc.), then you can re-use the Invocation.Builder. Re-using as much as possible prevents waste and also improves performance as you are no longer creating new clients, targets, etc. Make sure to close Response objects after using them.

Connection and Read Timeouts

Timeouts may be specified via the com.ibm.ws.jaxrs.client.connection.timeout and com.ibm.ws.jaxrs.client.receive.timeout properties in JAX-RS 2.0, via the connectTimeout() and readTimeout() methods in JAX-RS 2.1, or via server.xml using the webTarget element and setting the connectionTimeout and/or receiveTimeout attributes.

Keep-Alive Connection Pools

The JAX-RS V2 client in synchronous mode uses connection pooling through the JDK's HttpURLConnection. In particular, this means that if the server does not respond with a Keep-Alive response header, then the connection will time out after 5 seconds (which is tunable in recent versions of Java).

The JAX-RS V2 client in asynchronous mode uses connection pooling through Apache HttpClient. This HttpClient may be tuned with client.setProperty or property calls such as org.apache.cxf.transport.http.async.MAX_CONNECTIONS, org.apache.cxf.transport.http.async.MAX_PER_HOST_CONNECTIONS, org.apache.cxf.transport.http.async.CONNECTION_TTL, and org.apache.cxf.transport.http.async.CONNECTION_MAX_IDLE, although these have high default values.

It may be possible (although potentially unsupported) to switch from a JAX-RS V2 synchronous client into an asynchronous client with a client.setProperty or property call with use.async.http.conduit=true.

JSP

By default, Liberty compiles JSPs on first access (if a cached compile isn't already available). If you would like to compile all JSPs during server startup instead, use the following (these are re-compiled every startup):

<jspEngine prepareJSPs="0"/>

<webContainer deferServletLoad="false"/>

JSF

MyFaces JSF Embedded JAR Search for META-INF/*.faces-config.xml

By default, the Liberty Apache MyFaces JSF implementation searches JSF-enabled applications for META-INF/*.faces-config.xml files in all JARs on the application classpath. A CPU profiler might show stacks with tops such as:

java.util.jar.JarFile$1.nextElement

java.util.jar.JarFile$1.nextElement

org.apache.myfaces.view.facelets.util.Classpath._searchJar

org.apache.myfaces.view.facelets.util.Classpath._searchResource

org.apache.myfaces.view.facelets.util.Classpath.search

[...]FacesConfigResourceProvider.getMetaInfConfigurationResources

[...]

When an embedded faces-config.xml file is found, a message is written to messages.log with a wsjar: prefix, so this would be a simple way to check if such embedded resource searches are needed or not. For example:

Reading config : wsjar:file:[...]/installedApps/[...]/[...].ear/lib/bundled.jar!/META-INF/faces-config.xml

If your applications only use a faces-config.xml within the application itself and do not depend on embedded faces-config.xml files within JARs on the application classpath, then you can disable these searches with org.apache.myfaces.INITIALIZE_SKIP_JAR_FACES_CONFIG_SCAN=true if on JSF >= 2.3. This may be set in WEB-INF/web.xml, META-INF/web-fragment.xml, or globally as a JVM property.

For example, in jvm.options:

-Dorg.apache.myfaces.INITIALIZE_SKIP_JAR_FACES_CONFIG_SCAN=true

If you want to disable the searches globally using the JVM property but some applications do require embedded faces-config.xml files, then use the above property and enable the searches for those particular applications in WEB-INF/web.xml or META-INF/web-fragment.xml.

EJB

The ORB used by the Liberty EJB implementation is a fork of the Apache Geronimo Yoko ORB.

Yoko Timeouts

- -Dyoko.orb.policy.connect_timeout=MILLISECONDS (default -1 which is no timeout)

- -Dyoko.orb.policy.request_timeout=MILLISECONDS (default -1 which is no timeout)

- -Dyoko.orb.policy.reply_timeout=MILLISECONDS (default -1 which is no timeout)

- -Dyoko.orb.policy.timeout=MILLISECONDS: Change connect_timeout and request_timeout together (default -1 which is no timeout)

Remote Interface Optimization

If an application uses a remote EJB interface and that EJB component is available within the same JVM, the Yoko ORB that Liberty uses does not have the optional co-location optimization to automatically use the local interface (as is done with WAS traditional using its co-location optimization [different from prefer local]), and this will drive the processing of the remote EJB on a separate thread. If an EJB is not @Asynchronous, consider running such EJBs on the same thread by using the local interface instead of the remote interface. For optimal performance, if all calls are local, it is better to use the local interface even if the co-location optimization were supported.

Messaging

Liberty provides various forms of JMS messaging clients and connectors.

Activation Specifications

If using JMS MDBs, tune activation specifications' maxConcurrency to control the maximum concurrent MDB invocations and maxBatchSize to control message batch delivery size.

Embedded Messaging Server

The embedded messaging server (wasJmsServer) is similar to the WAS traditional SIB messaging with the following differences:

- There is no messaging bus in Liberty. A single messaging engine can run in each JVM but there is no cluster concept that will present the messaging engines, queues and topics within them as belonging to a single clustered entity.

- There is no high availability fail-over for the messaging engine. The client JVM is defined to access the queues and topics in messaging engines running in specific JVMs running at a specific hostname rather than anywhere in the same cell as in WAS traditional.

- There are other minor differences where Liberty sticks more closely to the JMS specification. Examples:

- JMSContext.createContext allows a local un-managed transaction to be started in WAS traditional (although this is often an application design error) but this is not allowed in Liberty.

- WAS traditional SIB APIs (com.ibm.websphere.sib) are not provided in Liberty.

The WebSphere Application Server Migration Toolkit helps discover and resolve differences.

Database Persistence

JPA 2.0 and before uses OpenJPA. JPA 2.1 and later uses EclipseLink.

JakartaEE

Classloading

The directory ${shared.config.dir}/lib/global (commonly, $WLP/usr/shared/lib/global/) is on the global classpath, unless the application specifies a classloader element, in which case a commonLibraryRef of global can be added to still reference that directory.

The ContainerClassLoader is the super class of the AppClassLoader. If an application has an EAR with one or more WARs, there will be two AppClassLoaders (one for the WAR module that is a child loader of the EAR's loader). If it's just a standalone WAR, then there is only one AppClassLoader.

The WAR/EAR AppClassLoader is a child of a GatewayClassLoader that represents the access to Liberty's OSGi bundle classloaders. The gateway loader only allows class loads that are in API packages (e.g. javax.servlet.* if the servlet feature is enabled, javax.ejb.* if the ejb feature is enabled, etc.).

Each WAR/EAR AppClassLoader also has a child loader called ThreadContextClassLoader that effectively has two parents: the associated AppClassLoader and its own GatewayClassLoader, which can load additional classes from Liberty bundles that are in packages marked for thread-context. This allows Liberty to load classes using the thread's context classloader without allowing an application class to directly depend on them.

AppClassLoader will use the Class-Path entry in a MANIFEST.MF on a JAR, but it is not required. The classpath for a WAR is all classes in the WEB-INF/classes directory plus all of the JAR files in WEB-INF/lib. If there are any private shared libraries associated with the application, then those class entries are added to the classpath too.

Liberty doesn't allow shared library references from WARs within an EAR. The references are only handled at the application scope (whether that's an EAR or a standalone WAR). Therefore, you can't separately reference a library for each WAR like you could in WAS traditional. The alternative is to put the jars from the shared library into the WARs' WEB-INF/lib directories.

JARs placed in ${LIBERTY}/usr/${SERVER}/lib/global should be accessible by all applications through Class.forName.
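To see the loader hierarchy described above from inside an application, a minimal sketch such as the following walks the parent chain of a classloader. The class name ClassLoaderChain and method describe are illustrative. Note the assumption that getParent() reports only a single parent, so the two-parent arrangement of the ThreadContextClassLoader would not be fully visible this way, and what Liberty's loaders return from getParent() is not something this sketch guarantees.

```java
import java.util.ArrayList;
import java.util.List;

public class ClassLoaderChain {
    /** Returns the class names of the given loader and of each parent reachable via getParent(). */
    public static List<String> describe(ClassLoader loader) {
        List<String> chain = new ArrayList<>();
        for (ClassLoader cl = loader; cl != null; cl = cl.getParent()) {
            chain.add(cl.getClass().getName());
        }
        // A null loader or parent conventionally denotes the JVM's bootstrap loader.
        chain.add("<bootstrap>");
        return chain;
    }

    public static void main(String[] args) {
        ClassLoader tccl = Thread.currentThread().getContextClassLoader();
        System.out.println("Thread context classloader chain: " + describe(tccl));
        System.out.println("Defining classloader chain: "
            + describe(ClassLoaderChain.class.getClassLoader()));
    }
}
```

Calling describe from a servlet or EJB, rather than from main, is what would show the application's loaders in a running server.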

Passing Configuration

Use a jndiEntry in server.xml; for example:

<variable name="myVariable" value="myValue"/>

<jndiEntry jndiName="myEntry" value="${myVariable}"/>

Then the application can call new InitialContext().lookup("myEntry").

Note that this may also be used to pass Liberty configuration such as, for example, ${com.ibm.ws.logging.log.directory} for the log directory.
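A minimal sketch of the application side of this lookup follows, with a fallback value for when the entry is missing. The class name ConfigLookup, the method lookupOrDefault, and the fallback string are illustrative assumptions; only the JNDI name myEntry comes from the configuration above.

```java
import javax.naming.InitialContext;
import javax.naming.NamingException;

public class ConfigLookup {
    /** Looks up a JNDI name and returns its string form, or the fallback if the lookup fails. */
    public static String lookupOrDefault(String jndiName, String fallback) {
        try {
            Object value = new InitialContext().lookup(jndiName);
            return value != null ? value.toString() : fallback;
        } catch (NamingException e) {
            // Also reached outside a server, where no JNDI provider is configured.
            return fallback;
        }
    }

    public static void main(String[] args) {
        // "myEntry" matches the jndiEntry example; "defaultValue" is a hypothetical fallback.
        System.out.println(lookupOrDefault("myEntry", "defaultValue"));
    }
}
```

Returning a fallback keeps the code usable in unit tests that run outside the server, at the cost of hiding a misconfigured entry, so logging the exception may be preferable in production code.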

dnf/yum/apt-get repositories

See https://openliberty.io/blog/2020/04/09/microprofile-3-3-open-liberty-20004.html#yum

Security


Tracing login: enable the trace specification *=info:com.ibm.ws.security.*=all:com.ibm.ws.webcontainer.security.*=all and search for performJaasLogin Entry and performJaasLogin Exit.

Failed login delays

APAR PH38929, introduced in 21.0.0.10, performs a delay of 0-5 seconds (a different random number in this range each time) on a failed login. Consider enabling and reviewing the HTTP access log to find such delays by checking for long response times with an HTTP 401 or 403 response code. If your original concern was about response time spikes in your monitoring, then you may consider leaving this behavior as-is and removing such 401 or 403 responses from your response time monitoring. Otherwise, if your security team reviews the failed logins and the potential of user enumeration attacks and decides it is okay to reduce or eliminate the delay, this may be done with failedLoginDelayMin and failedLoginDelayMax, although you should also continue to monitor for failed login attempts.
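As an illustration of checking the access log for delayed 401 or 403 responses, the sketch below filters log lines by status code and elapsed time. It assumes, hypothetically, that each line ends with the quoted request line, the status code, the response size, and the elapsed time in microseconds; the actual layout depends on the configured access log format. The class name, method name, and the sample lines are made up for the example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class FailedLoginDelays {
    // ASSUMPTION: each line ends with: "request line" status bytes elapsedMicros
    private static final Pattern TAIL =
        Pattern.compile("\"[^\"]*\" (\\d{3}) \\S+ (\\d+)\\s*$");

    /** Returns the lines with status 401 or 403 whose elapsed time is at least thresholdMicros. */
    public static List<String> slowAuthFailures(List<String> lines, long thresholdMicros) {
        List<String> hits = new ArrayList<>();
        for (String line : lines) {
            Matcher m = TAIL.matcher(line);
            if (!m.find()) {
                continue; // line does not match the assumed layout
            }
            int status = Integer.parseInt(m.group(1));
            long micros = Long.parseLong(m.group(2));
            if ((status == 401 || status == 403) && micros >= thresholdMicros) {
                hits.add(line);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        // Made-up sample lines in the assumed layout.
        List<String> sample = List.of(
            "10.0.0.1 - - [01/Jan/2024:00:00:00 +0000] \"POST /app/login HTTP/1.1\" 401 512 3200000",
            "10.0.0.1 - - [01/Jan/2024:00:00:05 +0000] \"GET /app/home HTTP/1.1\" 200 2048 15000");
        // Report failed logins that took one second or longer.
        slowAuthFailures(sample, 1_000_000L).forEach(System.out::println);
    }
}
```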

Basic Extensions using Liberty Libraries (BELL)

The bell feature allows packaging a ServletContainerInitializer in a JAR file with META-INF/services and running it for every application that gets deployed.

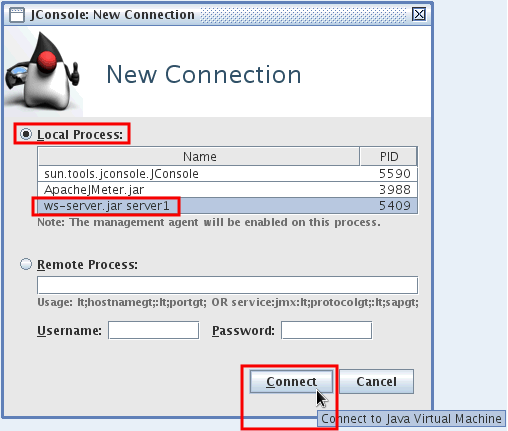

<bell libraryRef="scilib" />

JConsole

JConsole is a simple monitoring utility shipped with the JVM.

Note that while JConsole does have some basic capabilities of writing statistics to a CSV, this is limited to a handful of JVM statistics from the main JConsole tabs and is not available for the MXBean data. Therefore, for practical purposes, JConsole is only useful for ad-hoc, live monitoring.

To connect remotely with the restConnector, launch the client JConsole as follows:

jconsole -J-Djava.class.path=$JDK/lib/jconsole.jar:$JDK/lib/tools.jar:$LIBERTY/clients/restConnector.jar -J-Djavax.net.ssl.trustStore=$LIBERTY/usr/servers/server1/resources/security/key.jks -J-Djavax.net.ssl.trustStorePassword=$KEYSTOREPASSWORD -J-Djavax.net.ssl.trustStoreType=jks

Then use a URL such as service:jmx:rest://localhost:9443/IBMJMXConnectorREST and enter the administrator credentials.

- Start JConsole: WLP/java/{JAVA}/bin/jconsole

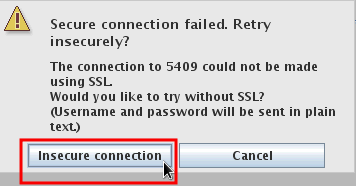

- Choose the JVM to connect to:

- You may be asked to automatically switch to the secure port:

- Review the overview graphs:

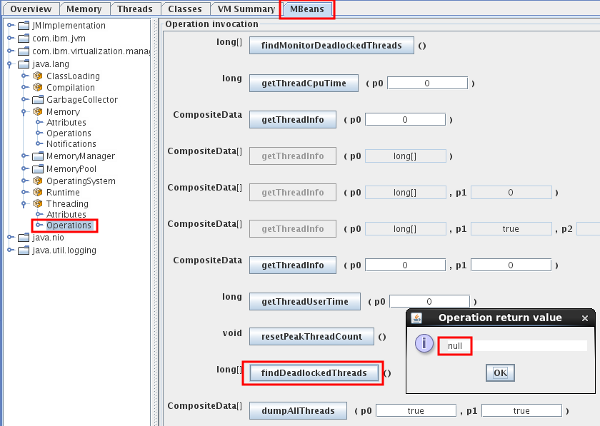

- Click the MBeans tab to review enabled data:

- You may also export some of the data by right clicking and creating a CSV file:

- You may also execute various MBeans, for example:

Accessing statistics through code:

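import java.lang.management.ManagementFactory;

import javax.management.JMX;

import javax.management.MBeanServer;

import javax.management.ObjectName;

// RestStatsMXBean is provided by Liberty's monitoring API; this code must run inside the Liberty JVM.

MBeanServer mbs = ManagementFactory.getPlatformMBeanServer();

ObjectName name = new ObjectName("WebSphere:type=REST_Stats,name=restmetrics/com.example.MyJaxRsService/endpointName");

RestStatsMXBean stats = JMX.newMXBeanProxy(mbs, name, RestStatsMXBean.class);

long requestCount = stats.getRequestCount();

double responseTime = stats.getResponseTime();

JConsole with HotSpot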

You may need options such as the following for local connections with HotSpot:

-Dcom.sun.management.jmxremote.port=9005

-Dcom.sun.management.jmxremote.authenticate=false

-Dcom.sun.management.jmxremote.ssl=false

Find Release for a Pull Request

- Find the line [...] merged commit X into OpenLiberty:integration [...]. Take the value of X. For example, for PR 10332, X is c7eb966.

- Click on the link for that commit and review the tags at the top. For example, commit c7eb966 shows tags starting at 20.0.0.2, which is the first release in which it is available.

- Alternatively, from the command line, search for tags with that commit: git tag --contains X. For example, PR 10332 is available starting in 20.0.0.2:

git tag --contains c7eb966

gm-20.0.0.2

gm-20.0.0.3

gm-20.0.0.4

gm-20.0.0.5

gm-20.0.0.6

gm-20.0.0.7

gm-20.0.0.8

gm-20.0.0.9

Java Support

Liberty requires a certain minimum version of Java. As of this writing, the only feature that requires a JDK is localConnector-1.0; all other features only need a JRE.

Quick Testing

If you have Docker installed, you may quickly test some configuration; for example:

$ echo '<server><config updateTrigger="mbean" monitorInterval="60m" /><logging traceSpecification="*=info:com.ibm.ws.config.xml.internal.ConfigFileMonitor=all" traceFormat="BASIC" traceFileName="stdout" maxFileSize="250" /></server>' > configdropin.xml && docker run --rm -v $(pwd)/configdropin.xml:/config/configDropins/overrides/configdropin.xml -it open-liberty:latest | grep -e CWWKF0011I -e "Configuration monitoring is enabled"

[9/14/20 21:16:27:371 GMT] 00000026 ConfigFileMon 3 Configuration monitoring is enabled. Monitoring interval is 3600000

[9/14/20 21:16:31:048 GMT] 0000002a FeatureManage A CWWKF0011I: The defaultServer server is ready to run a smarter planet. The defaultServer server started in 6.851 seconds.

^C

FFDC

Liberty will keep up to the last 500 FFDC files. These are generally small files, useful for post-mortem debugging, and don't need to be manually cleaned up.

z/OS

Monitoring on z/OS

WebSphere Liberty provides SMF 120 records for understanding performance aspects of various processes in a Liberty server. Note that there is a small throughput overhead for enabling SMF recording of approximately 3% (your mileage may vary).

- HTTP requests may be monitored with SMF 120 subtype 11 records. These records are enabled by adding the zosRequestLogging-1.0 feature to the server configuration and enabling SMF to capture those records.

- Java batch jobs may be monitored with SMF 120 subtype 12 records. These records are enabled by adding the batchSMFLogging-1.0 feature to the server configuration and enabling SMF to capture those records.


zIIPs/zAAPs

In general, WebSphere Liberty on z/OS is mostly Java so it mostly offloads to zIIPs (other than small bits such as creating WLM enclaves, SAF, writing SMF records, etc.). Even if application processing hands-off to non-zIIP-eligible native code (e.g. third party JNI), recent versions of z/OS (with APAR OA26713) have a lazy-switch design in which short bursts of such native code may stay on the zIIP and not switch to GCPs. For non-zIIP-eligible native code such as the type 2 DB2 driver, some of that may use zAAPs and total processor usage compared to type 4 depends on various factors and may be lower.

IBM Z IntelliMagic Vision for z/OS

To gather detailed IntelliMagic reports for WebSphere Liberty on z/OS:

- Click Reports > WebSphere > Liberty Requests > Requests

- At the top, click Selection and choose the relevant time period of interest

- At the top, click Export

- For "Item to export," select "Focal point: Liberty Requests"

- Select PDF and click Export

- Wait for the "Export Ready" green popup at the bottom right and then click Download

- Perform the same steps as above (including changing to the Focal point) but this time choose CSV instead of PDF

JAXB

JAXB may be used to marshal and unmarshal Java classes to and from XML, most commonly with web service clients or endpoints using JAX-WS such as through the xmlWS, xmlBinding, jaxws, or jaxb features.

If you observe that JAXBContext.newInstance is impacting performance, consider:

- Package a jaxb.index file for every package that does not contain an ObjectFactory class.

- Consider faster instantiation performance over faster sustained unmarshalling/marshalling performance:

- If using Liberty's xmlWS/xmlBinding: -Dorg.glassfish.jaxb.runtime.v2.runtime.JAXBContextImpl.fastBoot=true

- If using Liberty's jaxws/jaxb: -Dcom.sun.xml.bind.v2.runtime.JAXBContextImpl.fastBoot=true

- If creating a JAXBContext directly, consider using a singleton pattern which is thread safe.
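One way to sketch the thread-safe singleton idea is a small cache that creates each context at most once per class and then shares it. To keep the example runnable on a plain JDK, the factory is a stand-in; in a real application it would wrap JAXBContext.newInstance(type) and handle its checked JAXBException. The class name ContextCache is an illustrative assumption.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

public class ContextCache<C> {
    private final Map<Class<?>, C> contexts = new ConcurrentHashMap<>();
    private final Function<Class<?>, C> factory;

    public ContextCache(Function<Class<?>, C> factory) {
        this.factory = factory;
    }

    /** Returns the cached context for the type, creating it at most once even under concurrency. */
    public C get(Class<?> type) {
        return contexts.computeIfAbsent(type, factory);
    }

    public static void main(String[] args) {
        AtomicInteger creations = new AtomicInteger();
        // Stand-in factory (ASSUMPTION): replace with a wrapper around JAXBContext.newInstance(type).
        ContextCache<String> cache = new ContextCache<>(type -> {
            creations.incrementAndGet();
            return "context for " + type.getSimpleName();
        });
        cache.get(String.class);
        cache.get(String.class); // served from the cache, no new creation
        cache.get(Integer.class);
        System.out.println("contexts created: " + creations.get());
    }
}
```

Holding a single ContextCache instance in a static final field gives application-wide reuse, so the expensive creation happens once per bound class rather than once per request.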

Timed Operations

Timed operations was introduced before requestTiming and is largely superseded by requestTiming, although requestTiming only uses simple thresholds. Unless the more complex response time triggering is interesting, use requestTiming instead.

When enabled, the timed operation feature tracks the duration of JDBC operations running in the application server. In cases where operations take more or less time to execute than expected, the timed operation feature logs a warning. Periodically, the timed operation feature will create a report, in the application server log, detailing which operations took longest to execute. If you run the server dump command, the timed operation feature will generate a report containing information about all operations it has tracked.

To enable timed operations, add the timedOperations-1.0 feature to the server.xml file.

The following example shows a sample logged message:

[3/14/13 14:01:25:960 CDT] 00000025 TimedOperatio W TRAS0080W: Operation websphere.datasource.execute: jdbc/exampleDS:insert into cities values ('myHomeCity', 106769, 'myHomeCountry') took 1.541 ms to complete, which was longer than the expected duration of 0.213 ms based on past observations.

<featureManager>

<feature>timedOperations-1.0</feature>

</featureManager>